This video illustrates deep-learning-based audio-video late fusion for speaker detection.

|

This video illustrates head pose estimation based on an RGB-D sensor and offline key-frame learning.

|

This video illustrates video-based human action recognition that combines Bayesian and CNN inference.

|

This video illustrates real-time people tracking based on sparse representations and tracklet inference.

|

This video illustrates real-time 3D human motion tracking based on multiple synchronized Kinect sensors.

|

This video illustrates real-time people detection with a 360° perspective camera.

|

This video illustrates our multimodal interface for human-robot interaction (HRI). Speech and gesture interpretations are fused using a late-fusion strategy.
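As a rough illustration of what late fusion means here, the sketch below combines the output scores of two independent recognizers after each has made its own interpretation. It is not the interface's actual implementation: the function name `late_fusion`, the command labels, the scores, and the weighted-sum rule are all assumptions chosen for the example.

```python
def late_fusion(speech_scores, gesture_scores, speech_weight=0.5):
    """Combine per-command scores from two independent recognizers.

    Each input maps a (hypothetical) command label to a confidence score.
    The weighted sum used here is one common late-fusion rule, assumed
    for illustration only.
    """
    commands = set(speech_scores) | set(gesture_scores)
    fused = {}
    for cmd in commands:
        s = speech_scores.get(cmd, 0.0)   # a missing label counts as score 0
        g = gesture_scores.get(cmd, 0.0)
        fused[cmd] = speech_weight * s + (1.0 - speech_weight) * g
    # Normalize so the fused scores sum to 1 (when any score is non-zero).
    total = sum(fused.values())
    if total > 0:
        fused = {cmd: v / total for cmd, v in fused.items()}
    return fused


# Hypothetical recognizer outputs: speech is ambiguous, gesture is not.
speech = {"come_here": 0.6, "go_there": 0.4}
gesture = {"go_there": 0.9, "stop": 0.1}

fused = late_fusion(speech, gesture)
best = max(fused, key=fused.get)
print(best, fused)
```

In this toy case the pointing gesture resolves the ambiguity left by speech alone, which is the usual motivation for fusing the two modalities at the decision level.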

|

This video illustrates person tracking with an active perception system composed of a camera mounted on a pan-tilt unit and a 360° RFID detection system (bottom right image). Both the hardware and the software are embedded on a mobile robot operating in a cluttered, human-populated environment. The particle filtering framework enables the fusion of such heterogeneous data.
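The following minimal sketch shows how a particle filter can fuse a precise but intermittent measurement (such as a camera bearing) with a coarse but always available one (such as an RFID direction). It is an illustration under stated assumptions, not the system's implementation: the one-dimensional bearing state, the random-walk motion model, the Gaussian likelihoods, and all noise values are assumed.

```python
import math
import random


def angle_diff(a, b):
    """Smallest signed difference between two angles, in radians."""
    return (a - b + math.pi) % (2 * math.pi) - math.pi


def gaussian(err, sigma):
    """Unnormalized Gaussian likelihood of an error."""
    return math.exp(-0.5 * (err / sigma) ** 2)


def pf_step(particles, camera_bearing, rfid_bearing, rng,
            motion_sigma=0.1, camera_sigma=0.05, rfid_sigma=0.8):
    """One predict/update/resample cycle over bearing hypotheses."""
    # Predict: assumed random-walk motion of the tracked person.
    predicted = [p + rng.gauss(0.0, motion_sigma) for p in particles]
    # Update: multiply the likelihoods of whichever sensors reported.
    weights = []
    for p in predicted:
        w = 1.0
        if camera_bearing is not None:   # precise, but may be missing
            w *= gaussian(angle_diff(p, camera_bearing), camera_sigma)
        if rfid_bearing is not None:     # coarse, but covers 360 degrees
            w *= gaussian(angle_diff(p, rfid_bearing), rfid_sigma)
        weights.append(w + 1e-300)       # guard against all-zero weights
    # Resample particles in proportion to their weights.
    return rng.choices(predicted, weights=weights, k=len(predicted))


def circular_mean(angles):
    """Mean direction of a set of angles."""
    return math.atan2(sum(math.sin(a) for a in angles),
                      sum(math.cos(a) for a in angles))


rng = random.Random(0)
particles = [rng.uniform(-math.pi, math.pi) for _ in range(500)]
true_bearing = 1.0  # hypothetical ground truth, in radians

for step in range(20):
    # The camera loses the target on every other step; RFID is biased.
    cam = true_bearing if step % 2 == 0 else None
    particles = pf_step(particles, cam, true_bearing + 0.2, rng)

estimate = circular_mean(particles)
print(f"estimated bearing: {estimate:.2f} rad")
```

Because each sensor only contributes a likelihood term, measurements with very different accuracy and availability can be combined without a common representation beyond the particle state.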

|

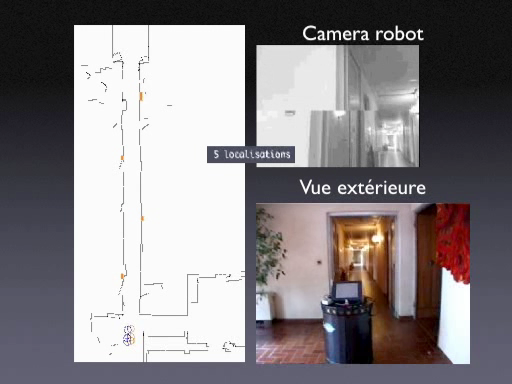

This video illustrates the Diligent robot navigating in corridors using visual functions dedicated to the extraction and recognition of visual landmarks, here planar landmarks detected by an onboard camera. The extracted landmarks are characterized by invariant attributes, so they can be recognized from a wide range of viewpoints.

|